Rather than direct convolution, it is faster to use an FFT and do the filtering in frequency space.
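Here is a rough host-side C++ sketch of what filtering in frequency space means for one row of pixels. It rests on a few assumptions. The row length must be a power of two. `fft` is a plain recursive radix-2 transform written only for illustration and is not a library call; a real GPU version would more likely use a library such as cuFFT. `lowpass_row` is a hypothetical helper that applies an ideal "box" cutoff by zeroing every bin above `cutoff` and then transforming back.

```cpp
#include <cassert>
#include <cmath>
#include <complex>
#include <cstddef>
#include <vector>

using cd = std::complex<double>;

// Recursive radix-2 Cooley-Tukey FFT; a.size() must be a power of two.
// invert == true computes the inverse transform, including the 1/N scale
// (applied as a factor of 1/2 at each of the log2(N) levels).
void fft(std::vector<cd>& a, bool invert) {
    const std::size_t n = a.size();
    assert(n != 0 && (n & (n - 1)) == 0);
    if (n == 1) return;
    std::vector<cd> even(n / 2), odd(n / 2);
    for (std::size_t i = 0; i < n / 2; ++i) {
        even[i] = a[2 * i];
        odd[i] = a[2 * i + 1];
    }
    fft(even, invert);
    fft(odd, invert);
    const double pi = std::acos(-1.0);
    const double sign = invert ? 1.0 : -1.0;
    for (std::size_t k = 0; k < n / 2; ++k) {
        const cd w = std::polar(1.0, sign * 2.0 * pi * k / n);
        a[k] = even[k] + w * odd[k];
        a[k + n / 2] = even[k] - w * odd[k];
        if (invert) {
            a[k] /= 2.0;
            a[k + n / 2] /= 2.0;
        }
    }
}

// Ideal ("box") low-pass on one row: keep bins whose frequency (in cycles
// per row) is <= cutoff, zero the rest, and transform back.
std::vector<double> lowpass_row(const std::vector<double>& row, std::size_t cutoff) {
    const std::size_t n = row.size();
    std::vector<cd> spec(row.begin(), row.end());
    fft(spec, false);
    for (std::size_t k = 0; k < n; ++k) {
        // Bins above n/2 hold the negative frequencies.
        const std::size_t freq = (k <= n / 2) ? k : n - k;
        if (freq > cutoff) spec[k] = 0.0;
    }
    fft(spec, true);
    std::vector<double> out(n);
    for (std::size_t i = 0; i < n; ++i) out[i] = spec[i].real();
    return out;
}
```

The FFT treats the row as periodic, so content near one edge leaks into the other. Padding the row (for example by mirroring it) before the transform is a common way to limit that. To downsample afterwards by an integer `scale`, a cutoff of about `n / (2 * scale)` cycles per row keeps only what the shorter row can represent, and you then keep every `scale`-th sample.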

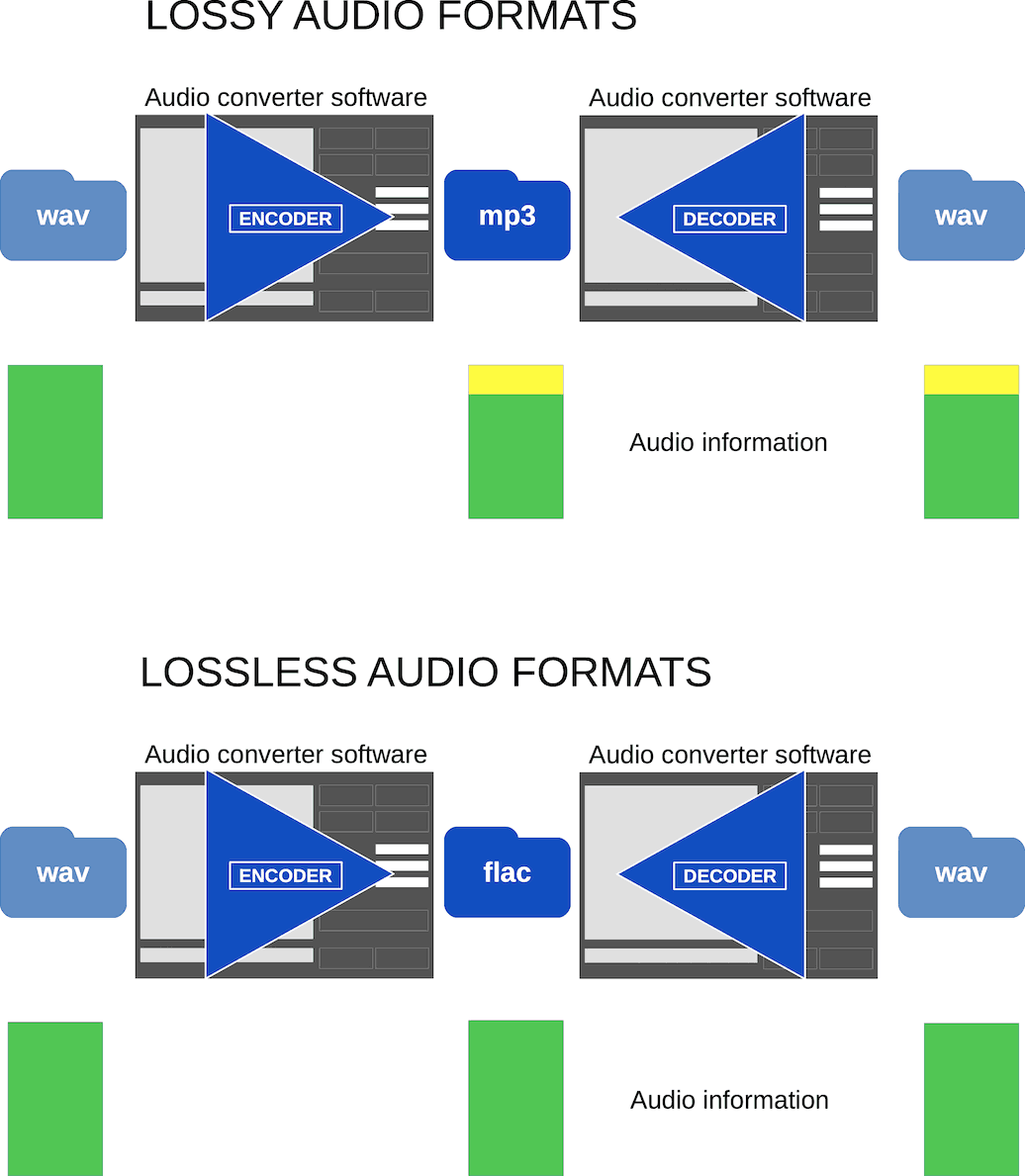

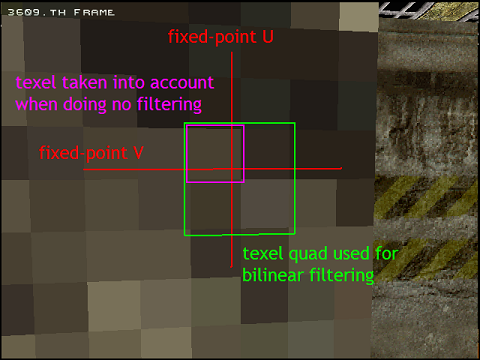

I'll look into the convolutionSeparable sample.

The selection of a particular filter for image processing is something of a black art, because the main criterion for judging the result is subjective: in computer graphics, the ultimate question is almost always "does it look good?". There are a lot of good filters out there, and the choice between the best ones frequently comes down to a judgement call. That said, I will go ahead with some theory.

Since you are familiar with Fourier analysis for signal processing, you don't really need to know much more to apply it to image processing. All the filters of immediate interest are "separable", which basically means you can apply them independently in the x and y directions. This reduces the problem of resampling a (2-D) image to the problem of resampling a (1-D) signal: instead of a function of time (t), your signal is a function of one of the coordinate axes (say, x), and everything else is exactly the same.

Ultimately, the reason you need a filter at all is to avoid aliasing. If you are reducing the resolution, you need to filter out the high-frequency content of the original that the new, lower resolution can't represent; otherwise it gets folded into unrelated frequencies instead. While you're filtering out the unwanted frequencies, you want to preserve as much of the original signal as you can, and you don't want to distort the part you do preserve. Finally, you want to extinguish the unwanted frequencies as completely as possible.

This means that, in theory, a good filter should be a "box" function in frequency space: unity response for frequencies below the cutoff, zero response for frequencies above it, and a step at the cutoff. And, in theory, this response is achievable: as you may know, a straight sinc filter gives you exactly that. In practice, though, a straight sinc filter is unbounded and doesn't drop off very fast, so doing a straightforward convolution with it will be very slow.
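Separability is also what makes a two-pass structure work. Below is a minimal host-side sketch with these assumptions: a single-channel float image stored row-major, an integer `scale` that divides both dimensions, and a plain box average as the 1-D filter instead of the sinc filter discussed above. `shrink_x`, `shrink_y` and `downsample_separable` are hypothetical names. The first pass filters and shrinks along x, the second along y, and the result equals averaging each scale x scale block directly.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Pass 1: w x h -> (w/scale) x h, averaging runs of `scale` samples along x.
std::vector<float> shrink_x(const std::vector<float>& src, std::size_t w,
                            std::size_t h, std::size_t scale) {
    assert(scale > 0 && w % scale == 0 && src.size() == w * h);
    const std::size_t ow = w / scale;
    std::vector<float> dst(ow * h);
    for (std::size_t y = 0; y < h; ++y)
        for (std::size_t x = 0; x < ow; ++x) {
            float sum = 0.0f;
            for (std::size_t k = 0; k < scale; ++k) sum += src[y * w + x * scale + k];
            dst[y * ow + x] = sum / static_cast<float>(scale);
        }
    return dst;
}

// Pass 2: w x h -> w x (h/scale), averaging runs of `scale` samples along y.
std::vector<float> shrink_y(const std::vector<float>& src, std::size_t w,
                            std::size_t h, std::size_t scale) {
    assert(scale > 0 && h % scale == 0 && src.size() == w * h);
    const std::size_t oh = h / scale;
    std::vector<float> dst(w * oh);
    for (std::size_t y = 0; y < oh; ++y)
        for (std::size_t x = 0; x < w; ++x) {
            float sum = 0.0f;
            for (std::size_t k = 0; k < scale; ++k) sum += src[(y * scale + k) * w + x];
            dst[y * w + x] = sum / static_cast<float>(scale);
        }
    return dst;
}

// Separable 2-D box downsample: the x pass followed by the y pass.
std::vector<float> downsample_separable(const std::vector<float>& src, std::size_t w,
                                        std::size_t h, std::size_t scale) {
    const std::vector<float> tmp = shrink_x(src, w, h, scale);
    return shrink_y(tmp, w / scale, h, scale);
}
```

For a box filter this only reorganises the work. For a wider separable kernel with k taps per axis, though, two 1-D passes cost roughly 2k reads per output pixel, versus k² for a direct 2-D convolution. That difference matters for long kernels such as a truncated sinc.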
I'm almost thinking I should rewrite the kernel so that it runs one thread per destination pixel and does a gather operation instead. This won't be well coalesced either, though. I think what I need to do is allocate some shared memory and break this up into two passes. I wanted to get some feedback on what a good approach would be.

Textures were the first thing I looked into, but oddly enough it seems that CUDA does not support any fancy texturing modes:

> These functions fetch the region of linear memory bound to texture reference texRef using texture coordinate x. No texture filtering and addressing modes are supported. For integer types, these functions may optionally promote the integer to 32-bit floating point.

The problem here is that a) I'd have to convert back to integer, and b) it only does nearest-neighbour filtering, so copying to a very small texture would lead to incorrect results. Also, even if it supported bilinear filtering, copying into a texture that's less than 50% of the size of the original would no longer be filtered correctly, since the bilinear blend only considers the 4 neighbouring texels.

I take that back: the docs are a bit unclear, and it seems there is some basic linear and bilinear filtering supported:

> Linear texture filtering may be done only for textures that are configured to return floating-point data. It performs low-precision interpolation between neighboring texels. When enabled, the texels surrounding a texture fetch location are read and the return value of the texture fetch is interpolated based on where the texture coordinates fell between the texels. Simple linear interpolation is performed for one-dimensional textures and bilinear interpolation is performed for two-dimensional textures.
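The 4-texel limit can be illustrated on the host. The sketch below emulates a 2-D bilinear fetch with unnormalized coordinates under three assumptions: texel centres sit at i + 0.5, edge texels are clamped, and full float weights are used instead of the hardware's low-precision interpolation. `bilinear` and `fetch_downsample` are hypothetical names. A fetch at the shared corner of a 2x2 block returns the exact average of those four texels, so one fetch per output pixel gives a correct box filter for a 2:1 reduction. At 4:1, the same single fetch still reads only 4 of the 16 source texels it should cover.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Emulated bilinear fetch on a w x h single-channel image. Coordinates are
// unnormalized, with texel (i, j) centred at (i + 0.5, j + 0.5).
float bilinear(const std::vector<float>& img, int w, int h, float x, float y) {
    const float xb = x - 0.5f, yb = y - 0.5f;
    const int i = static_cast<int>(std::floor(xb));
    const int j = static_cast<int>(std::floor(yb));
    const float a = xb - i, b = yb - j;  // fractional blend weights
    auto texel = [&](int ti, int tj) {
        ti = std::max(0, std::min(ti, w - 1));  // clamp-to-edge addressing
        tj = std::max(0, std::min(tj, h - 1));
        return img[static_cast<std::size_t>(tj) * w + ti];
    };
    // Only these 4 texels ever contribute, whatever the downscale factor.
    return (1 - a) * (1 - b) * texel(i, j) + a * (1 - b) * texel(i + 1, j) +
           (1 - a) * b * texel(i, j + 1) + a * b * texel(i + 1, j + 1);
}

// One fetch per destination pixel, at the centre of the source block it
// covers. This matches a box filter only when scale == 2.
std::vector<float> fetch_downsample(const std::vector<float>& img, int w, int h, int scale) {
    assert(scale > 0 && w % scale == 0 && h % scale == 0);
    const int ow = w / scale, oh = h / scale;
    std::vector<float> out(static_cast<std::size_t>(ow) * oh);
    for (int y = 0; y < oh; ++y)
        for (int x = 0; x < ow; ++x)
            out[static_cast<std::size_t>(y) * ow + x] =
                bilinear(img, w, h, (x + 0.5f) * scale, (y + 0.5f) * scale);
    return out;
}
```

For an even scale larger than 2, one way to stay on the filtered-fetch path is to issue (scale / 2)² fetches per output pixel, each at the shared corner of a different 2x2 sub-block, and average the results. That covers the whole block at the cost of more fetches.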

I wrote a CUDA program which takes an arbitrarily sized RGBA image and copies it into a smaller image. While it does this, it must do a sort of "bilinear filter" to average the pixels. The operation is quite simple:

1. Determine the scale/ratio of the src vs. dst image (i.e. 8x8 to 4x4 is scale 2).
2. For each source pixel, divide by scale^2 and add the result to the destination pixel.

So in the above example we'd be taking 4 pixels, scaling each one by 0.25, and adding them together to produce the result pixel. The problem, of course, is that this is completely not thread safe: many threads are reading from and writing to the same destination pixel. It's also going to be terrible for memory coalescing.
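The one-thread-per-destination-pixel gather suggested in the thread can be sketched on the host as follows. Each call of the hypothetical `gather_pixel` reads its scale x scale source block and writes exactly one destination pixel. Because no two "threads" ever write the same location, no atomics are needed. The sketch assumes 8-bit RGBA stored row-major and an integer scale that divides both dimensions. `RGBA` and `downsample_gather` are also illustrative names. In a CUDA kernel, the two outer loops would become each thread's x/y index.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

struct RGBA { std::uint8_t r, g, b, a; };

// Destination pixel (dx, dy) = box average of the scale x scale source block
// it covers. This is the work one thread would do in a gather-style kernel.
RGBA gather_pixel(const std::vector<RGBA>& src, std::size_t src_w,
                  std::size_t dx, std::size_t dy, std::size_t scale) {
    unsigned sum[4] = {0, 0, 0, 0};
    for (std::size_t y = dy * scale; y < (dy + 1) * scale; ++y)
        for (std::size_t x = dx * scale; x < (dx + 1) * scale; ++x) {
            const RGBA& p = src[y * src_w + x];
            sum[0] += p.r; sum[1] += p.g; sum[2] += p.b; sum[3] += p.a;
        }
    const unsigned n = static_cast<unsigned>(scale * scale);
    // Divide once with rounding, rather than dividing each source pixel by
    // scale^2 before adding (which would truncate every term).
    auto avg = [&](unsigned s) { return static_cast<std::uint8_t>((s + n / 2) / n); };
    return {avg(sum[0]), avg(sum[1]), avg(sum[2]), avg(sum[3])};
}

std::vector<RGBA> downsample_gather(const std::vector<RGBA>& src, std::size_t w,
                                    std::size_t h, std::size_t scale) {
    assert(scale > 0 && w % scale == 0 && h % scale == 0 && src.size() == w * h);
    const std::size_t ow = w / scale, oh = h / scale;
    std::vector<RGBA> dst(ow * oh);
    for (std::size_t dy = 0; dy < oh; ++dy)      // CUDA: y thread index
        for (std::size_t dx = 0; dx < ow; ++dx)  // CUDA: x thread index
            dst[dy * ow + dx] = gather_pixel(src, w, dx, dy, scale);
    return dst;
}
```

Each thread still reads a strided block, so coalescing is not solved by this alone. Staging rows in shared memory, or splitting the work into separate x and y passes, is the usual next step.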
